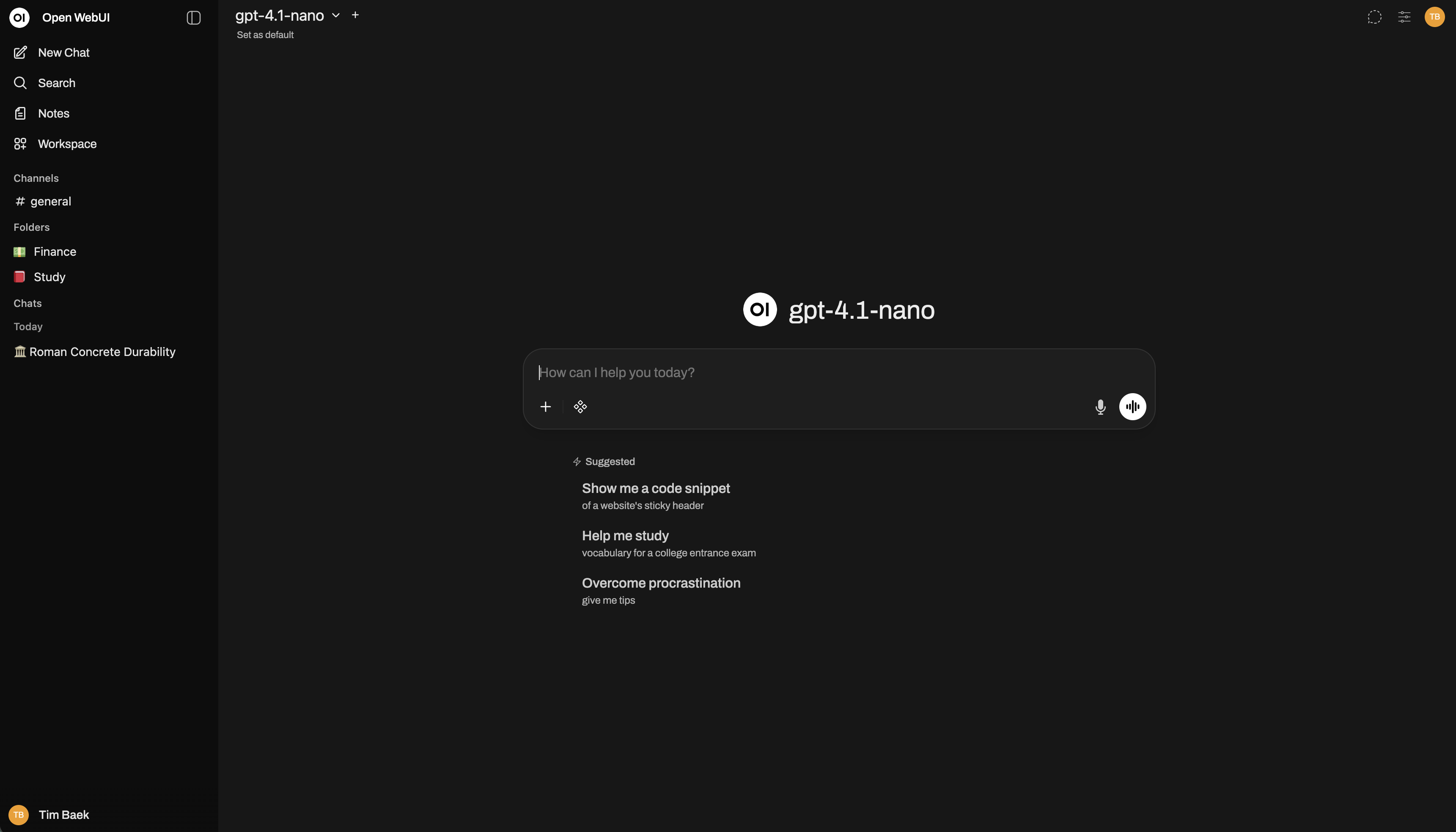

Open WebUI

Open WebUI is an extensible, feature-rich, and user-friendly self-hosted AI platform designed to operate entirely offline. It supports Ollama and OpenAI-compatible APIs, making it a powerful, provider-agnostic solution for both local and cloud-based models.

Quick Start

- Docker

- pip

- uv

- Desktop

docker run -d -p 3000:8080 --add-host=host.docker.internal:host-gateway -v open-webui:/app/backend/data --name open-webui --restart always ghcr.io/open-webui/open-webui:main

Then open http://localhost:3000.

For GPU support, Docker Compose, and more → Full Docker guide
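The server may need a moment after startup before the page loads. As a minimal sketch (not part of Open WebUI), the helper below polls a URL with curl until it responds or a retry limit is reached. The function name `wait_for_http` and its default of 30 attempts, 2 seconds apart, are assumptions chosen for illustration.

```shell
#!/bin/sh
# Hypothetical helper: poll a URL until it answers with a successful
# HTTP status, or give up after a fixed number of attempts.
wait_for_http() {
  url=$1
  attempts=${2:-30}   # how many times to try (assumed default)
  delay=${3:-2}       # seconds to wait between tries (assumed default)
  i=1
  while [ "$i" -le "$attempts" ]; do
    # -f: fail on HTTP errors, -s: silent, -o /dev/null: discard the body
    if curl -fs -o /dev/null --max-time 5 "$url"; then
      echo "ready: $url"
      return 0
    fi
    # Sleep only if another attempt will follow.
    [ "$i" -lt "$attempts" ] && sleep "$delay"
    i=$((i + 1))
  done
  echo "not reachable after $attempts attempts: $url" >&2
  return 1
}
```

With the Docker command above, a call would look like `wait_for_http http://localhost:3000`. For the pip and uv installs, the port is 8080 instead.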

pip install open-webui

open-webui serve

Then open http://localhost:8080.

curl -LsSf https://astral.sh/uv/install.sh | sh

DATA_DIR=~/.open-webui uvx --python 3.11 open-webui@latest serve

Then open http://localhost:8080.

Download the desktop app from github.com/open-webui/desktop. It runs Open WebUI natively on your system without Docker or manual setup.

The desktop app is a work in progress and is not yet stable. For production use, install via Docker or Python.

Installed Open WebUI but not sure where to start? The Essentials for Open WebUI guide covers the six things every new user needs to know: plugins, tool calling, task models, context management, RAG, and Open Terminal.

Getting Started

- Quick Start — Docker, Python, Kubernetes install options

- Connect a Provider — Ollama, OpenAI, Anthropic, vLLM, and more

- Essentials for Open WebUI - Start here after your first install. Plugins, tool calling, task models, context management, RAG, Open Terminal.

- Connect an Agent — Hermes Agent, OpenClaw, and other autonomous AI agents

- Updating — Keep your instance current

- Development Branch — Help test the latest changes before stable release

- Advanced Topics — Scaling, logging, and advanced configuration

Explore

- Features — Discover what Open WebUI can do

- Tutorials — Step-by-step guides

- FAQ — Common questions answered

- Troubleshooting — Fix common issues

- Reference — Environment variables and API details

Enterprise

Need custom branding, SLA support, or Long-Term Support (LTS) versions? → Learn about Enterprise plans

Get Involved

- Contributing — Help build Open WebUI

- Development Setup — Run the project locally from source

- Discord — Join the community

- GitHub — Report issues, submit PRs

- Careers — Join our team